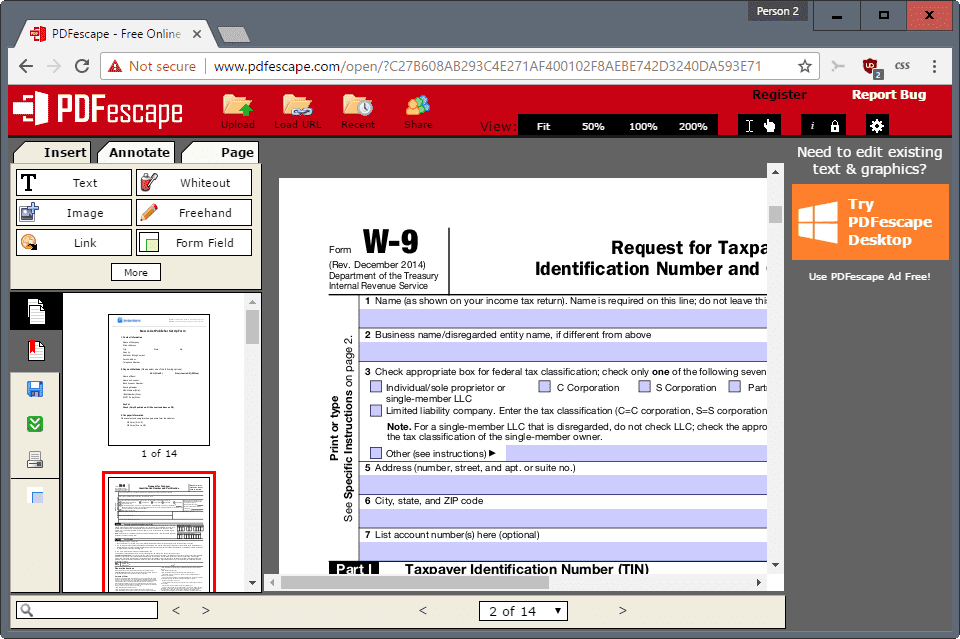

Upload

Drag and drop your PDF or image into the dashboard, or select it manually from your device. You can also connect through our API, or link cloud storage such as Dropbox, Google Drive, Amazon S3, or Microsoft OneDrive to our document processing pipeline.

Verify in Seconds

Our system instantly analyzes the document using advanced AI to detect fraud. It examines metadata, text structure, and embedded signatures, and looks for signs of manipulation.

Get Results

Receive a detailed report on the document's authenticity, either directly in the dashboard or via webhook. See exactly what was checked and why, with full transparency.

How Fake PDFs Are Created and Why They're Difficult to Detect

Understanding how fraudulent PDFs are produced is the first step toward reliable detection. Sophisticated attackers use a variety of methods, from simple text edits to deep content insertion. Common techniques include copying pages from legitimate documents and editing text with standard PDF editors, replacing or overlaying images, altering embedded fonts, and injecting invisible objects or form fields. Attackers also exploit scanned images by running the image through editing software and then re-embedding it as a new page, preserving the visual appearance while changing critical details like dates, amounts, or names.

Part of the challenge comes from the layered structure of a typical PDF. A single file can contain raster images, vector graphics, embedded fonts, metadata, annotations, and scripts. A forgery might tamper with only one layer—such as the visual raster layer—while leaving other layers intact, creating the illusion of authenticity. Meanwhile, legitimate edits by different software can also alter structural elements, creating false positives for naive detectors. Attackers increasingly leverage template-based forgeries and automated tools that retain original metadata to avoid detection.

To stay ahead, detection must go beyond visual inspection. Analysis of creation tools, modification timestamps, embedded font discrepancies, and inconsistencies between image pixels and OCR-extracted text can reveal subtle signs of tampering. Patterns like duplicated page structures, mismatched text encodings, or suspicious use of annotation objects can all indicate manipulation. Combining multiple cross-checks (structural, visual, and cryptographic) reduces the risk of missing sophisticated forgeries.

Technical Methods to Verify Authenticity: Metadata, Signatures, and Content Analysis

Robust PDF verification combines several technical approaches. Start with a metadata audit: examine creation and modification timestamps, producer and creator fields, and software identifiers. Inconsistencies such as a modification date earlier than the creation date, or a producer field indicating a consumer-grade editor on a document purportedly generated by a corporate system, are red flags. Metadata can be intentionally stripped or forged, so it must be validated against other document evidence.
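As a minimal sketch of the timestamp and producer checks above, the Python below parses PDF date strings (the `D:YYYYMMDDHHmmSSOHH'mm'` format defined by the PDF specification) and flags a modification date earlier than the creation date, empty producer fields, or a producer the purported issuer is not known to use. It assumes the document-information dictionary has already been extracted into a plain `dict` by some PDF library; `audit_metadata` and its `expected_producers` parameter are hypothetical names, and treating a date with no offset as UTC is an assumption.

```python
import re
from datetime import datetime, timedelta, timezone

# PDF date strings: D:YYYYMMDDHHmmSSOHH'mm' (every field after the year is optional).
_PDF_DATE = re.compile(
    r"^(?:D:)?(\d{4})(\d{2})?(\d{2})?(\d{2})?(\d{2})?(\d{2})?"
    r"(?:([Zz+-])(\d{2})?'?(\d{2})?'?)?$"
)


def parse_pdf_date(value):
    """Parse a PDF date string into a timezone-aware datetime, or return None."""
    m = _PDF_DATE.match(value.strip())
    if not m:
        return None
    year, month, day, hour, minute, second, sign, tz_h, tz_m = m.groups()
    try:
        offset = timedelta(hours=int(tz_h or 0), minutes=int(tz_m or 0))
        if sign == "-":
            offset = -offset
        # Assumption: a date with no offset is treated as UTC for comparison.
        return datetime(int(year), int(month or 1), int(day or 1),
                        int(hour or 0), int(minute or 0), int(second or 0),
                        tzinfo=timezone(offset))
    except ValueError:  # out-of-range fields, e.g. month 13
        return None


def audit_metadata(info, expected_producers=()):
    """Return red flags found in a document-information dict (hypothetical helper)."""
    flags = []
    dates = {}
    for key in ("CreationDate", "ModDate"):
        raw = info.get(key)
        if raw:
            dates[key] = parse_pdf_date(raw)
            if dates[key] is None:
                flags.append(f"{key} is not a valid PDF date: {raw!r}")
    created, modified = dates.get("CreationDate"), dates.get("ModDate")
    if created and modified and modified < created:
        flags.append("ModDate is earlier than CreationDate")

    producer = info.get("Producer") or ""
    if not producer and not info.get("Creator"):
        flags.append("Producer and Creator are both empty (possibly stripped)")
    if expected_producers and producer and not any(
        p.lower() in producer.lower() for p in expected_producers
    ):
        flags.append(f"Producer {producer!r} is not one the issuer is known to use")
    return flags


if __name__ == "__main__":
    print(audit_metadata(
        {"CreationDate": "D:20240310090000Z", "ModDate": "D:20240301090000Z",
         "Producer": "Generic Editor 2.1"},
        expected_producers=("Acme ERP",),  # hypothetical issuer software
    ))
```

Because metadata is easy to forge, an empty result here only means these particular fields look consistent; it should be weighed alongside the signature and content checks.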

Digital signatures and certificate chains provide cryptographic assurance when implemented correctly. Verifying an embedded signature involves validating the certificate chain up to a trusted issuer, checking revocation status, confirming that the signed digest matches the bytes the signature covers, and checking whether anything was added outside that signed range. Unsigned documents can still be evaluated via content hashing, comparing the file hash with known-good versions or records from a secure repository. When signatures are present but appear invalid, deeper inspection often reveals incremental modifications after signing or tampering with the signature placeholder.
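The signed region is recorded in each signature dictionary's `/ByteRange` array of four integers: the offset and length of the bytes before the signature value, and the offset and length of the bytes after it. The sketch below assumes the raw file bytes are available and that signature dictionaries are stored uncompressed; a regex scan is a simplification, and production code should use a real PDF parser plus a CMS library for the cryptographic verification itself, which this sketch does not attempt. It reports signed ranges that don't start at byte 0, overlap, run past the end of the file, or stop short of it, and shows the plain known-good hash comparison for unsigned files. Both function names are hypothetical.

```python
import hashlib
import re

# /ByteRange [off1 len1 off2 len2]: the signed bytes are data[off1:off1+len1] plus
# data[off2:off2+len2]; the gap between them holds the signature value itself.
_BYTE_RANGE = re.compile(rb"/ByteRange\s*\[\s*(\d+)\s+(\d+)\s+(\d+)\s+(\d+)\s*\]")


def check_signature_coverage(data):
    """Return findings about signed byte ranges in raw PDF bytes (hypothetical helper)."""
    ranges = [tuple(map(int, m.groups())) for m in _BYTE_RANGE.finditer(data)]
    if not ranges:
        return ["no /ByteRange found (unsigned, or stored where a regex cannot see it)"]
    findings = []
    for off1, len1, off2, len2 in ranges:
        if off1 != 0:
            findings.append(f"signed range starts at byte {off1}, not 0")
        if off1 + len1 > off2:
            findings.append("the two signed regions overlap")
        if off2 + len2 > len(data):
            findings.append("signed range extends past the end of the file")
    # Bytes past the furthest signed offset are not covered by any signature.
    end = max(off2 + len2 for _, _, off2, len2 in ranges)
    if end < len(data):
        findings.append(f"{len(data) - end} bytes appended after the last signed range")
    return findings


def matches_known_good(data, known_sha256_hex):
    """Compare a file's SHA-256 digest with a value from a trusted record."""
    return hashlib.sha256(data).hexdigest() == known_sha256_hex.strip().lower()
```

Trailing unsigned bytes are a lead for deeper inspection, not proof of forgery: legitimate incremental updates, such as added long-term validation data, also land after a signed range.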

Content-level analysis augments cryptographic checks. Optical character recognition (OCR) can convert scanned pages into searchable text; discrepancies between OCR results and selectable text layers can indicate layering or overlay tricks. Examination of embedded fonts and glyph mappings often reveals substituted characters or mismatched font metrics. Image-level forensics, such as error level analysis, histogram comparisons, and JPEG quantization table analysis, can expose splicing or recompression artifacts. Structural parsing of the PDF object tree, cross-reference tables, and stream compression methods yields further clues about the edits made and the toolchains used to produce the file.
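For the structural side, each incremental save appends a new cross-reference section, a `startxref` pointer, and an `%%EOF` marker, and any object it changes is written again under the same object number. Counting those markers and repeated object headers gives a cheap first look at a file's edit history. This is a rough heuristic under stated assumptions: objects packed inside compressed object streams are invisible to it, binary stream data can occasionally produce false matches, and linearized files legitimately contain two `%%EOF` markers even without edits. `revision_summary` is a hypothetical helper name.

```python
import re
from collections import Counter

# "N G obj" headers; the lookbehind stops a match from starting mid-number.
_OBJ_HEADER = re.compile(rb"(?<!\d)(\d+)\s+(\d+)\s+obj\b")
_STARTXREF = re.compile(rb"startxref\s+\d+")


def revision_summary(data):
    """Rough edit-history indicators from raw PDF bytes (hypothetical helper)."""
    object_counts = Counter(int(m.group(1)) for m in _OBJ_HEADER.finditer(data))
    return {
        # Normally one %%EOF and one startxref per saved revision.
        "eof_markers": data.count(b"%%EOF"),
        "startxref_count": len(_STARTXREF.findall(data)),
        # Object numbers defined more than once were rewritten by a later update.
        "redefined_objects": sorted(n for n, c in object_counts.items() if c > 1),
    }


if __name__ == "__main__":
    original = (b"%PDF-1.7\n1 0 obj\n<< /Type /Catalog >>\nendobj\n"
                b"2 0 obj\n(Amount: 100)\nendobj\nxref\ntrailer\nstartxref\n9\n%%EOF\n")
    update = b"2 0 obj\n(Amount: 900)\nendobj\nxref\ntrailer\nstartxref\n120\n%%EOF\n"
    print(revision_summary(original + update))
```

A redefined object that holds page content or a text string, as in the toy example, is worth a closer look; a redefined object that only updates form-field values may be entirely legitimate.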

Real-World Examples and a Practical Workflow for Rapid Detection

Practical detection workflows combine automated tooling with human review. Consider an altered invoice that appears legitimate: visual inspection shows company letterhead and a plausible total, but an automated pipeline flags a mismatch in the metadata producer. OCR reveals that the invoice number in the visual image does not match the embedded text layer, and image forensics show recompression artifacts localized around the amount field. This layered evidence supports a finding of manipulation and warrants follow-up with the submitting party.
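The invoice-number check in this example amounts to comparing two text sources token by token. A minimal sketch using Python's standard `difflib`, assuming the OCR output and the embedded text layer have already been extracted as strings (the extraction tools are outside its scope); `layer_mismatches` is a hypothetical helper name. Case and whitespace are normalized so that line wrapping alone does not register as a difference.

```python
import difflib
import re


def _tokens(text):
    # Lowercase and split on whitespace so layout differences are ignored.
    return re.findall(r"\S+", text.lower())


def layer_mismatches(ocr_text, text_layer):
    """Return (ocr_tokens, text_layer_tokens) pairs where the two sources disagree."""
    a, b = _tokens(ocr_text), _tokens(text_layer)
    matcher = difflib.SequenceMatcher(a=a, b=b, autojunk=False)
    return [
        (" ".join(a[i1:i2]), " ".join(b[j1:j2]))
        for tag, i1, i2, j1, j2 in matcher.get_opcodes()
        if tag != "equal"
    ]


if __name__ == "__main__":
    ocr = "INVOICE No. 10482  Total due: 1,250.00 EUR"    # read from the page image
    layer = "Invoice No. 10428\nTotal due: 1,250.00 EUR"  # read from the text layer
    print(layer_mismatches(ocr, layer))  # [('10482', '10428')]
```

Real OCR output contains its own errors (`0` read as `O`, dropped punctuation), so in practice mismatches on high-value fields such as invoice numbers, dates, and amounts deserve more weight than scattered single-character differences.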

Another common scenario involves forged academic certificates. Attackers may scan a genuine diploma, replace the recipient name with a new image layer, and re-embed the file while preserving original issuance dates in metadata. Detection systems that analyze font metrics and glyph shapes can reveal the substitution, and signature verification routines can flag missing or invalid certification seals. Integration with institutional records, such as checking document hashes against a secure ledger or contacting the issuing body, provides additional certainty.

A streamlined operational workflow looks like this: upload the document to an automated analyzer; run parallel checks for metadata anomalies, signature validation, OCR vs. text-layer comparison, and image forensics; then compile a human-readable report that highlights suspicious items. The Upload, Verify in Seconds, and Get Results process described earlier maps directly onto modern detection services and supports fast decision-making. For teams looking for an out-of-the-box solution, tools that detect fake PDFs automatically, integrate via API or cloud storage, and return webhook notifications are especially valuable for high-volume environments such as finance, HR, and admissions.
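The "parallel checks, then one report" shape of this workflow can be sketched with Python's standard `concurrent.futures` module. This assumes each check is a function that takes the raw file bytes and returns a list of human-readable findings; `run_checks`, `render_report`, and the toy checks in the usage block are hypothetical placeholders for real metadata, signature, OCR, and image-forensics routines.

```python
from concurrent.futures import ThreadPoolExecutor


def run_checks(data, checks):
    """Run independent checks concurrently and collect findings per check.

    `checks` maps a name to a callable taking the raw file bytes and returning a list
    of human-readable findings; an empty list means nothing suspicious was found.
    """
    with ThreadPoolExecutor(max_workers=max(len(checks), 1)) as pool:
        futures = {name: pool.submit(fn, data) for name, fn in checks.items()}
    # Leaving the with-block waits for every submitted check to finish.
    report = {}
    for name, future in futures.items():
        try:
            report[name] = list(future.result())
        except Exception as exc:  # a crashed check is reported, not silently passed
            report[name] = [f"check failed: {exc!r}"]
    return report


def render_report(report):
    """Format findings as a short human-readable report, one block per check."""
    lines = []
    for name, findings in report.items():
        lines.append(f"[{'FLAGGED' if findings else 'ok'}] {name}")
        lines.extend(f"    - {finding}" for finding in findings)
    return "\n".join(lines)


if __name__ == "__main__":
    # Toy stand-ins for real metadata, signature, OCR and image-forensics routines.
    checks = {
        "metadata": lambda data: [],
        "revisions": lambda data: (
            [f"{data.count(b'%%EOF')} end-of-file markers"]
            if data.count(b"%%EOF") > 1 else []
        ),
    }
    print(render_report(run_checks(b"%PDF-1.7 ... %%EOF ... %%EOF", checks)))
```

Threads suit checks that mostly wait on I/O, such as calling an OCR service or querying certificate revocation status; CPU-heavy image forensics may run better under `ProcessPoolExecutor`. Turning a crashed check into a visible finding keeps a broken analyzer from reading as a clean result.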